Half of social science research falls apart under scrutiny

With so many biased or corrupt experts and academics, you need to be more skeptical

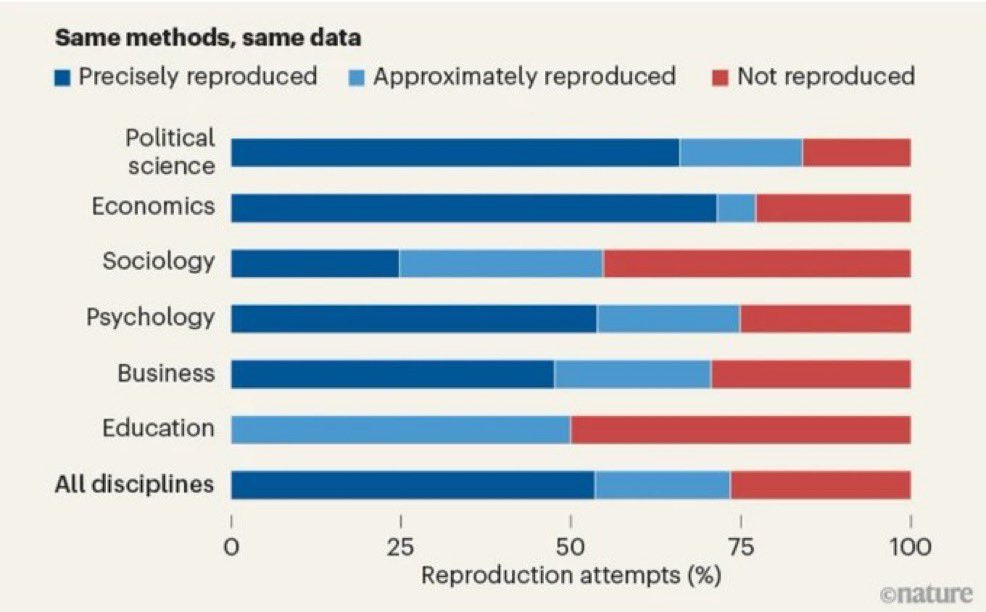

I previously wrote about how social science research is 90% ideologically uniform. A new Nature piece shows something else: a massive seven-year project examining 3,900 social-science papers found that researchers could replicate the results of only about half the studies they tested.

The initiative, called SCORE, was funded by DARPA and involved 865 researchers across 62 journals. It tested three things: reproducibility, robustness, and replicability. The results at each step were unflattering. Of 600 papers where researchers attempted to reproduce the original results, only 145 contained enough detail to even try, and of those, just 53% matched precisely. Among the subset of studies that were fully replicated from scratch, only about 49% held up with statistical significance.

This isn’t a surprise to anyone who’s been paying attention and seen odd stories shared online. I mentioned the replication crisis briefly in my last piece, and here it is in full color. The question is whether people are willing to connect the dots between the two stories, because they are deeply intertwined.

When a field is ideologically homogeneous, and we established that social sciences lean left with a correlation so tight it’s essentially a monoculture, you lose the adversarial pressure that makes science work. A heterodox researcher asking uncomfortable questions, demanding better methodology, or refusing to accept a fashionable conclusion is exactly the immune system science needs. Remove that person through self-selection and you get a literature that feels like it’s settled but isn’t. As one Stanford meta-scientist put it, the SCORE results are “not surprising,” consistent with every smaller study before it. The field has known. It just hasn’t fixed it.

Part of the failure is lack of care: many papers simply don’t share enough data or detail for anyone to verify their work. Publish fast, publish positive, don’t invite scrutiny. But ideology and sloppiness compound. When peer reviewers share your priors, a weak study with the right conclusions will clear the bar. When your entire discipline does, the weak studies accumulate into a body of “evidence” that informs policy.

And that’s why this matters beyond the ivory tower. Research on immigration, education, criminal justice, housing, all of it flows downstream into real decisions affecting real people. If the underlying literature doesn’t replicate, the policy built on top of it ends up harming all of us.

There’s a sliver of hope: a separate analysis found that papers from 2022–23 reproduced at 85%, suggesting newer transparency norms are helping. But that progress doesn’t rehabilitate decades of literature already embedded in textbooks, policy briefs, and what people believe when they discuss these topics online and in person. And if you sit and think about it for a moment, you realize the bias piece and the replication piece aren’t really two separate scandals. They’re both part of the same one.

Germane: "The poison was never forced. It was offered gently, until you forgot it was poison at all." —Mark Twain

Boy, you are so right about this - the social sciences hold a lot of sway in higher education because they present themselves as having data-driven answers to everyone's problems. But because their subject is most often mutable, capricious people, these answers change all of the time; upper admin worships at the social science altar, but it's all just an over-funded shot in the dark. I guess that's my own bias coming through, but I've been teaching college for 40 years.....